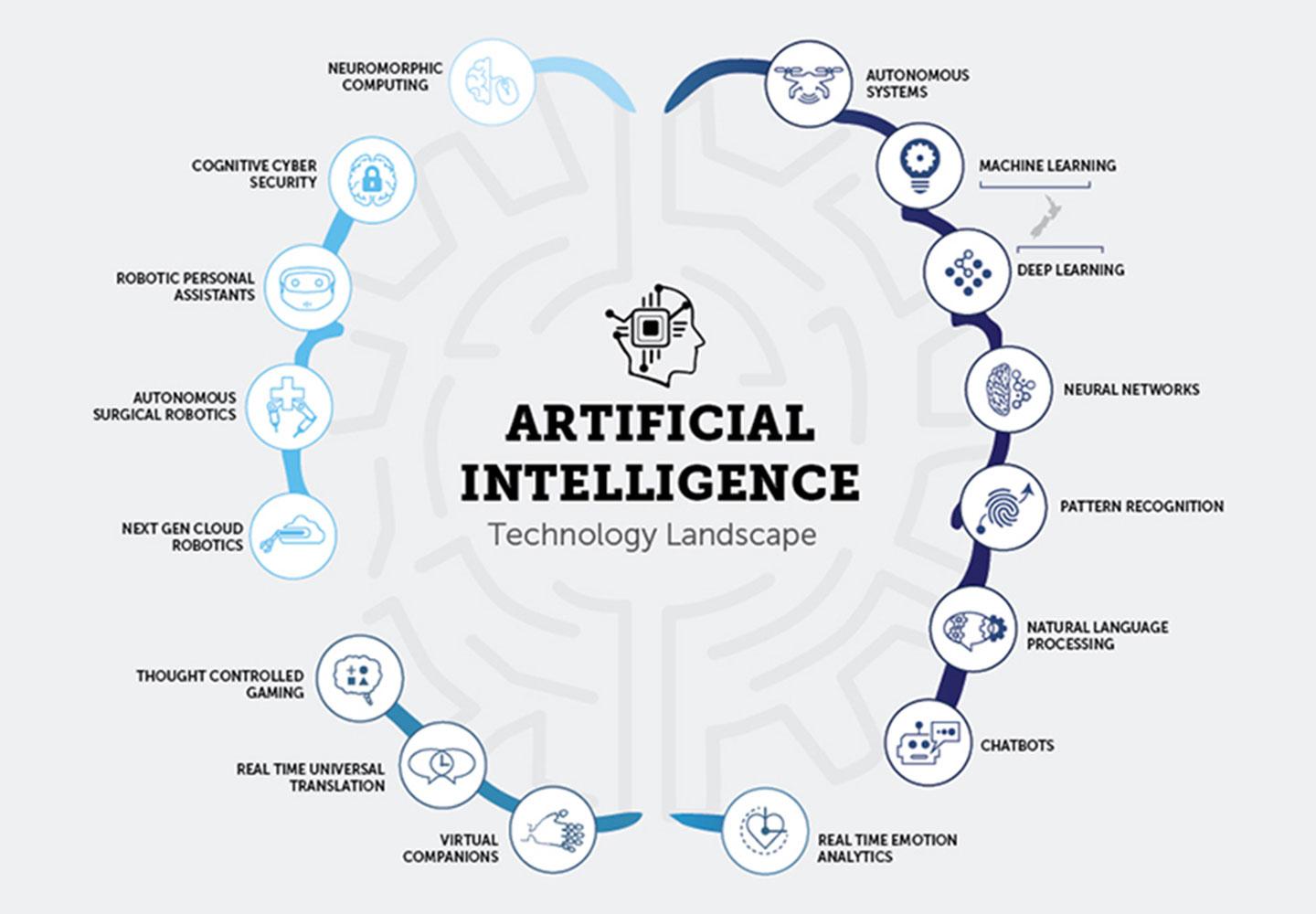

From technical capability to business leverage

As model usage expands, compute cost and serving efficiency increasingly shape product margins, customer experience, and deployment viability. ACE3 sits at the layer of the stack where those pressures converge.

- Supports AI companies that need stronger performance from existing infrastructure

- Addresses inference, deployment, and infrastructure efficiency rather than model quality alone

- Connects research-led optimisation with practical commercial use cases

- Builds a defensible layer beneath application and platform growth